Crowdsourcing Multi-label Audio Annotation Tasks with Citizen Scientists

Cartwright, M., Dove, G., Mendez, A.E.M., Bello, J.P., Nov, O. Crowdsourcing Multi-label Audio Annotation Tasks with Citizen Scientists. In Proceedings of ACM Conference on Human Factors in Computing Systems (CHI), 2019.

Abstract

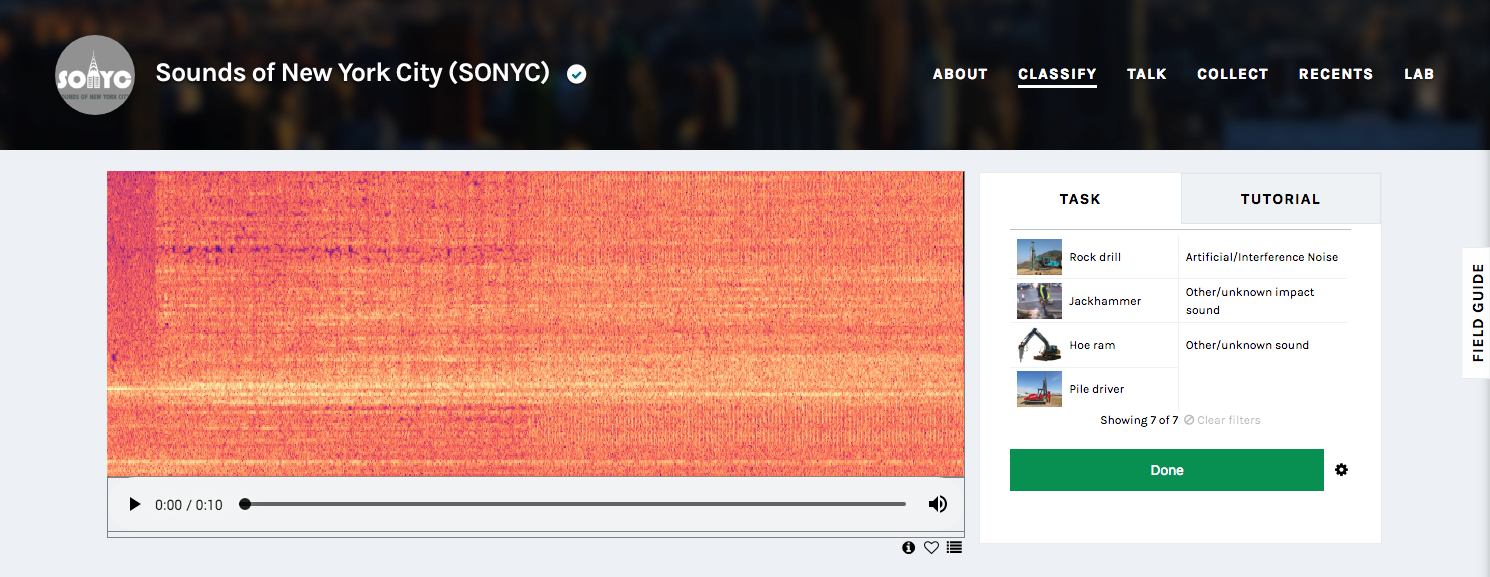

Annotating rich audio data is an essential aspect of training and evaluating machine listening systems. We approach this task in the context of temporally complex urban soundscapes, which require multiple labels to identify overlapping sound sources. Typically, this work is crowdsourced, and previous studies have shown that workers can quickly label audio with binary annotation for single classes. However, this approach can be difficult to scale when multiple passes with different focus classes are required to annotate data with multiple labels. In citizen science, where tasks are often image-based, annotation efforts typically label multiple classes simultaneously in a single pass. This paper describes our data collection on the Zooniverse citizen science platform, comparing the efficiencies of different audio annotation strategies. We compared multiple-pass binary annotation, single-pass multi-label annotation, and a hybrid approach: hierarchical multi-pass multi-label annotation. We discuss our findings, which support using multi-label annotation, with reference to volunteer citizen scientists’ motivations.
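To make the scaling concern concrete, the sketch below counts how many annotation passes a single clip would need under each of the three strategies. It is illustrative only and rests on several assumptions. The two-level taxonomy is hypothetical, loosely modeled on urban sound categories. The hierarchical scheme is assumed to consist of one multi-label pass over coarse classes followed by one multi-label pass for each coarse class marked present. The sketch counts passes only. It does not model the time or accuracy of each pass, which is what the study itself measures.

```python
from typing import Dict, List, Set

# Hypothetical two-level taxonomy (coarse class -> fine classes); not the paper's.
TAXONOMY: Dict[str, List[str]] = {
    "engine": ["small engine", "medium engine", "large engine"],
    "alert signal": ["car horn", "siren", "car alarm"],
    "human voice": ["talking", "shouting"],
}


def passes_binary(taxonomy: Dict[str, List[str]]) -> int:
    """Multiple-pass binary: one yes/no pass per fine (focus) class."""
    return sum(len(fine) for fine in taxonomy.values())


def passes_multilabel(taxonomy: Dict[str, List[str]]) -> int:
    """Single-pass multi-label: every class is offered at once, so one pass
    suffices regardless of taxonomy size (argument kept for a uniform API)."""
    return 1


def passes_hierarchical(taxonomy: Dict[str, List[str]], coarse_present: Set[str]) -> int:
    """Assumed hierarchical scheme: one coarse multi-label pass, then one
    fine-level multi-label pass per coarse class the annotator marked present."""
    return 1 + sum(1 for coarse in taxonomy if coarse in coarse_present)


if __name__ == "__main__":
    present = {"engine", "human voice"}  # hypothetical coarse labels for one clip
    print("binary:", passes_binary(TAXONOMY))
    print("multi-label:", passes_multilabel(TAXONOMY))
    print("hierarchical:", passes_hierarchical(TAXONOMY, present))
```

With this toy taxonomy, binary annotation needs one pass per fine class, 8 in total. The number of passes grows with the taxonomy, which is the scaling issue raised above. Fewer passes do not by themselves imply faster or more accurate annotation, because each multi-label pass asks more of the annotator.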